Schema Detection

Schema detection in Validio automatically derives a schema for every source by reading metadata or inferring it from the data, with manual configuration available for finer control, so your validation rules rest on accurate schema information.

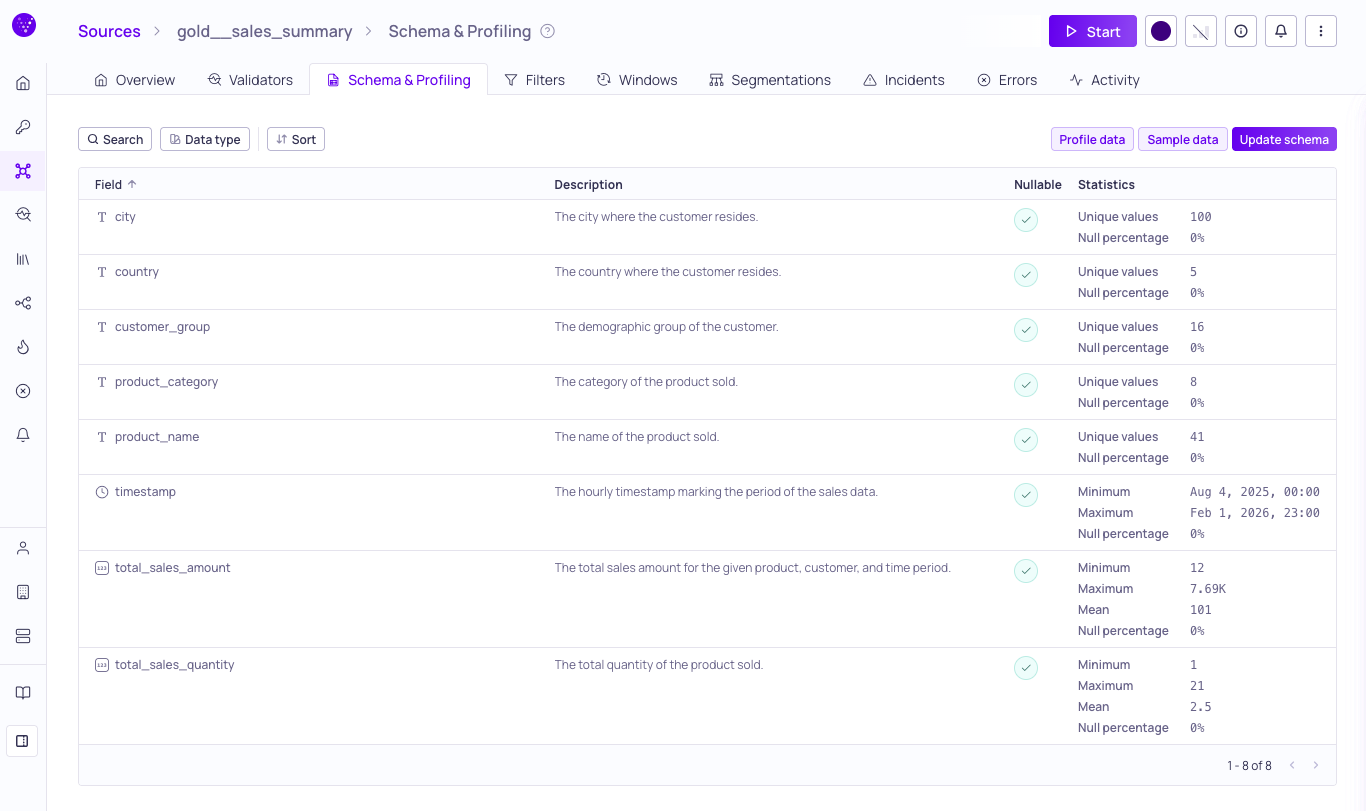

Source schema list with profiling results

Validio automatically derives a schema for every source using two primary methods, metadata reading and schema inference, and you can refine the result through manual configuration. This ensures your data validation rules are built on accurate schema information without manual setup.

- Schema from metadata: For most structured data types, Validio reads the schema from the data source metadata, for example from `INFORMATION_SCHEMA` in a data warehouse.
- Schema from inference: When no predefined schema exists, such as for JSON or other semi-structured data types, Validio infers the schema from patterns in the existing data.
- Manual configuration: Depending on the data source and data types, you can configure the schema manually by selecting the fields, including nested fields, to validate.
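To make the inference idea concrete, here is a minimal sketch of deriving a schema from semi-structured records. It is an illustration, not Validio's implementation: the coarse type names (`NUMERIC`, `STRING`, and so on), the dotted path format for nested fields, and the `MIXED` marker for inconsistent types are all assumptions. A field is treated as nullable if any record has it missing or set to `null`.

```python
from typing import Any


def _type_name(value: Any) -> str:
    """Map a JSON value to a coarse, illustrative type name."""
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        return "BOOLEAN"
    if isinstance(value, (int, float)):
        return "NUMERIC"
    if isinstance(value, str):
        return "STRING"
    if isinstance(value, list):
        return "ARRAY"
    return "UNKNOWN"


def _flatten(record: dict, prefix: str = "") -> dict:
    """Flatten nested objects into dotted paths such as 'user_profile.age'."""
    flat = {}
    for key, value in record.items():
        path = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(_flatten(value, path + "."))
        else:
            flat[path] = value
    return flat


def infer_schema(records: list[dict]) -> dict[str, dict]:
    """Infer {path: {"type": ..., "nullable": ...}} from sample records."""
    flattened = [_flatten(r) for r in records]
    all_paths = sorted({p for f in flattened for p in f})
    schema = {}
    for path in all_paths:
        types = set()
        nullable = False
        for f in flattened:
            value = f.get(path)
            if value is None:  # field missing or explicitly null
                nullable = True
            else:
                types.add(_type_name(value))
        if len(types) == 1:
            inferred = types.pop()
        elif not types:
            inferred = "UNKNOWN"  # only nulls were seen
        else:
            inferred = "MIXED"  # inconsistent types across rows
        schema[path] = {"type": inferred, "nullable": nullable}
    return schema
```

A field that comes back as `MIXED` or `UNKNOWN` corresponds to the kind of ambiguity that can make automatic inference fail for real data.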

Supported Complex Data Types

In addition to structured data, Validio supports semi-structured and other complex data types. You can select these fields or nested fields when you configure a source.

Data Warehouses

| Source system | Supported complex data types |
|---|---|
| Athena | ARRAY, MAP, STRUCT |
| ClickHouse | Tuple, Named Tuple, Nested, Array |
| Databricks | STRUCT, ARRAY, VARIANT |
| Google BigQuery | JSON, ARRAY, STRUCT |
| PostgreSQL | JSON, JSONB, Array |
| Redshift | SUPER |
| Snowflake | ARRAY, OBJECT, VARIANT |

Data Streams

| Source system | Supported complex data types |
|---|---|
| Kafka | JSON, Protobuf, Avro |
| Kinesis | JSON, Protobuf, Avro |
| Pub/Sub | JSON, Protobuf, Avro |

JSONPath Expressions and Arrays

Validio does not currently support validating data within an array. However, you can validate the size of an array.

Validio uses JSONPath expressions to represent data structures. For each array, Validio adds a computed numeric field named `some_array.length()` that represents the size of the array.
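The computed length field can be pictured with the following sketch. It is hypothetical: `add_length_fields` is not a Validio API, and the dotted path format only mirrors the `some_array.length()` naming above. Each array is replaced by a numeric `<path>.length()` field because its elements are not validated, only its size.

```python
from typing import Any


def add_length_fields(record: dict, prefix: str = "") -> dict[str, Any]:
    """Flatten a record, exposing each array only through '<path>.length()'.

    Hypothetical helper for illustration; not part of Validio.
    """
    fields: dict[str, Any] = {}
    for key, value in record.items():
        path = f"{prefix}{key}"
        if isinstance(value, dict):
            # Nested objects keep their structure as dotted paths.
            fields.update(add_length_fields(value, path + "."))
        elif isinstance(value, list):
            # Array elements are not validated; only the size is exposed.
            fields[f"{path}.length()"] = len(value)
        else:
            fields[path] = value
    return fields
```

For example, a record `{"order_id": 7, "items": [1, 2, 3]}` yields the fields `order_id` and `items.length()`, and a validator on `items.length()` would then see the value `3`.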

Understanding Nullable Fields

If the automatically inferred schema doesn't match your expectations for incoming data, you can modify the nullability settings and data types to better reflect your data structure.

- Check the `Nullable` option to include datapoints with `NULL` values in validation.
- When the option is unchecked, datapoints with `NULL` values in that field are excluded from validator metrics.

This gives you control over how missing or null data affects your validation results.
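As an illustration of the effect described above, the sketch below filters datapoints before a simple count metric is computed. The function `rows_for_metric` is hypothetical and only models the documented behavior (unchecked `Nullable` excludes `NULL` datapoints); it does not reproduce how Validio computes any specific metric.

```python
def rows_for_metric(rows: list[dict], field: str, nullable: bool) -> list[dict]:
    """Return the datapoints a validator on `field` would consider.

    Hypothetical helper modeling the Nullable setting:
    - nullable=True keeps datapoints whose value is NULL (None here).
    - nullable=False drops them before any metric is computed.
    """
    if nullable:
        return list(rows)
    return [row for row in rows if row.get(field) is not None]


def count_metric(rows: list[dict], field: str, nullable: bool) -> int:
    """A simple count metric over the datapoints kept for `field`."""
    return len(rows_for_metric(rows, field, nullable))
```

With the rows `[{"age": 20}, {"age": None}, {"age": 35}]`, the count is 3 when `age` is nullable and 2 when it is not.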

When Automatic Detection Fails

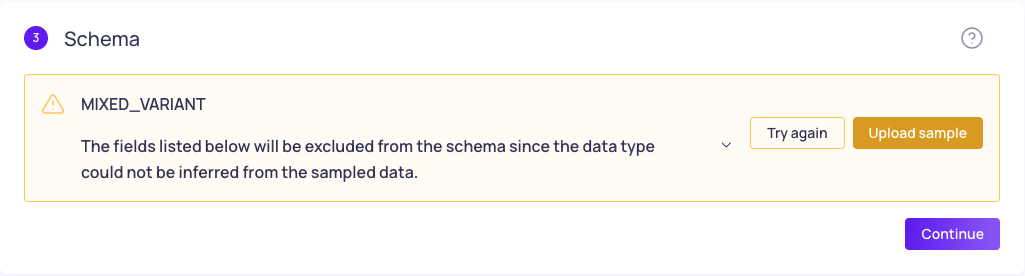

Schema detection error with option to Upload sample data file.

Schema inference may encounter issues in these scenarios:

- Large tables: Inference times out when source tables are too large to process efficiently.
- Unknown data types: Validio cannot determine appropriate data types for schema fields.
- Mixed data types: Semi-structured data has inconsistent data types across rows.

Upload a JSON sample

When automatic detection fails, upload a JSON sample file to help Validio understand your schema structure.

Sample data format:

```json
{
  "date": "2025-01-01",
  "user_id": 123,
  "user_profile": {
    "age": 20,
    "name": "Bob"
  }
}
```

Case Sensitivity: The properties (fields and values) used in the uploaded sample data file must match the case conventions of your data source. For example, Snowflake defaults to uppercase, so your sample data should use uppercase field names.
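If your sample comes from lowercase application data but the source is Snowflake, a small script can prepare the file. This is a sketch under assumptions: `uppercase_keys` and `write_sample` are hypothetical helpers, and they uppercase field names only, leaving values unchanged, so check any values whose case matters yourself.

```python
import json
from typing import Any


def uppercase_keys(value: Any) -> Any:
    """Recursively uppercase object keys, e.g. to match Snowflake's default casing."""
    if isinstance(value, dict):
        return {str(k).upper(): uppercase_keys(v) for k, v in value.items()}
    if isinstance(value, list):
        return [uppercase_keys(item) for item in value]
    return value  # values are left as-is


def write_sample(record: dict, path: str, upper: bool = False) -> None:
    """Write one sample record as a JSON file ready for upload."""
    data = uppercase_keys(record) if upper else record
    with open(path, "w", encoding="utf-8") as fh:
        json.dump(data, fh, indent=2)
```

Calling `write_sample(sample, "sample.json", upper=True)` on the sample above produces a file with the keys `DATE`, `USER_ID`, and `USER_PROFILE` (with nested `AGE` and `NAME`).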

Schema Change Validation

Validio automatically validates schema changes for structured data in data warehouses and object storage files. Validio runs schema checks hourly and reports detected changes as incidents for immediate attention. For more information about handling incidents, see About Validator Incidents.
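Conceptually, a schema check compares the current schema with the previous one. The sketch below shows that comparison for two `{field: type}` snapshots; it is an assumption-laden illustration, not Validio's check, and the function name `diff_schemas` and the change messages are hypothetical.

```python
def diff_schemas(old: dict[str, str], new: dict[str, str]) -> list[str]:
    """Compare two {field: type} snapshots and describe each change."""
    changes = []
    # Fields present before but missing now.
    for field in sorted(old.keys() - new.keys()):
        changes.append(f"removed field {field}")
    # Fields that did not exist in the previous snapshot.
    for field in sorted(new.keys() - old.keys()):
        changes.append(f"added field {field} ({new[field]})")
    # Fields present in both snapshots whose type changed.
    for field in sorted(old.keys() & new.keys()):
        if old[field] != new[field]:
            changes.append(f"changed type of {field}: {old[field]} -> {new[field]}")
    return changes
```

Each non-empty result would correspond to a change worth raising as an incident; an empty list means the two snapshots match.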
